Surviving the JavaScript ecosystem updates of 2018

Tuesday, December 18th, 2018 | Programming

When I first launched the WAM website it was built using a reasonably straightforward stack of React + Babel + Webpack. That was a year or two ago and a lot has happened since then. Notably, there have been some JS security issues, so we’re going to start upgrading the stack on a regular basis.

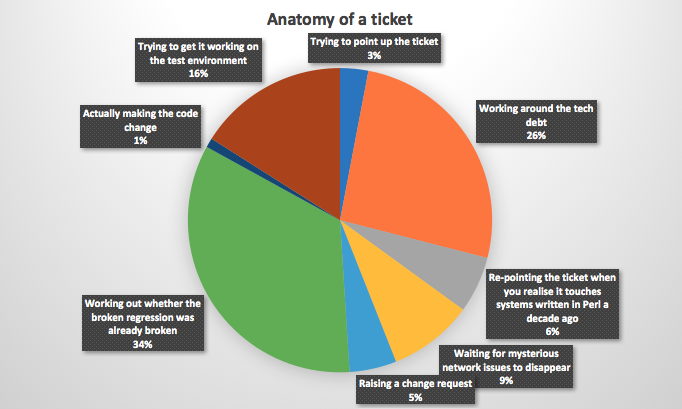

As anyone who has worked with JavaScript knows, though, that is a massive pain in the ass. Here are some notes on the upgrade process.

Upgrading Gulp

Gulp has now moved to version 4. First off, you need to uninstall any previous versions of Gulp. You then install the Gulp CLI globally, and the latest version of Gulp locally.

npm install -g gulp-cli
npm install --save-dev gulp

This was a massive hassle. The uninstalls did not work and I had to manually go through the file system to get rid of the thing.

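There were also some changes to the config itself. Any paths such as dest('') had to be changed to dest('.'), and all of the functions that defined the Gulp tasks now had to return something Gulp can wait on, such as the stream, rather than just being called.

// Return the stream (or a promise, or call the callback) so Gulp 4 knows when the task is done.
gulp.task('name', () => gulp.src('src/*').pipe(gulp.dest('.'))); // 'src/*' is a placeholder glob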

The way dependent tasks are run has also changed. So, anywhere that used to be ['sass'], for example, now needs to be:

gulp.series('sass')
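To make the dependency change concrete, here is a minimal gulpfile sketch for Gulp 4. The task names, the glob and the empty task bodies are placeholders rather than the WAM config; only gulp.task, gulp.series and gulp.watch are real Gulp APIs here.

```javascript
// gulpfile.js: a sketch for Gulp 4. Task names and globs are placeholders.
const gulp = require('gulp');

// Stand-in for the real Sass task. It signals completion by calling `done`.
gulp.task('sass', (done) => {
  // ...compile stylesheets here...
  done();
});

// Gulp 3: gulp.task('build', ['sass'], () => { ... });
// Gulp 4: compose the dependency and the task body with gulp.series.
gulp.task('build', gulp.series('sass', (done) => {
  // ...the rest of the build...
  done();
}));

// Gulp 3: gulp.watch('scss/**/*.scss', ['sass']);
// Gulp 4: pass a composed function instead of an array of task names.
gulp.task('watch', () => {
  gulp.watch('scss/**/*.scss', gulp.series('sass'));
});
```

If tasks do not depend on each other, gulp.parallel takes the same arguments as gulp.series and runs them concurrently.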

Upgrading Node

You’ll want to upgrade Node to the latest LTS version. This includes editing the engine in your package.json file, especially if you are using Heroku.
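As a rough illustration, the engines entry in package.json looks like "engines": { "node": "10.x" }, and the sketch below checks the running Node version against it. The helper names (majorOf, satisfiesEngine) are made up for this example, and it only understands a simple "MAJOR.x" entry; proper semver ranges would need a real semver library.

```javascript
// check-node.js: a hypothetical helper, not part of the original project.
// Compares a Node version string against a simple "MAJOR.x" engines entry.

// Extract the major version number from strings like "v10.14.2" or "10.x".
function majorOf(version) {
  const match = /^v?(\d+)/.exec(String(version).trim());
  return match ? Number(match[1]) : null;
}

// True when the given Node version has the same major as the engines entry.
function satisfiesEngine(nodeVersion, engineRange) {
  const wanted = majorOf(engineRange);
  return wanted !== null && majorOf(nodeVersion) === wanted;
}

// Example package.json fragment; 10.x was the active LTS line in late 2018.
const pkg = { engines: { node: '10.x' } };

console.log(
  `Node ${process.version} vs engines.node ${pkg.engines.node}:`,
  satisfiesEngine(process.version, pkg.engines.node) ? 'ok' : 'mismatch'
);
```

Running something like this before a deploy is one way to catch a local Node version that has drifted from what the host will use.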

Upgrading React

This was as simple as updating the versions in package.json and running an update. However, I did run into a problem with the React CSS Transition Group add-on, which I had to swap for a drop-in replacement.

npm install --save react-transition-group@1.x

npm remove react-addons-css-transition-group

And then change the import statement to:

import CSSTransitionGroup from 'react-transition-group/CSSTransitionGroup';

Upgrading Babel

This was mostly a case of bumping the version numbers, but I also had to update my .babelrc file. Babel 7 moved its packages under the @babel scope, and the object rest/spread plugin, previously babel-plugin-transform-object-rest-spread, had to be renamed as well.

{
  "presets": ["@babel/react", "@babel/preset-env"],
  "plugins": ["@babel/plugin-proposal-object-rest-spread"]
}
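The package names in package.json change along with the .babelrc entries. The sketch below maps a few Babel 6 names to their Babel 7 equivalents; the renameBabelDeps helper, the sample dependency list and the '^7.0.0' placeholder version are all invented for illustration, so pin whatever versions you actually install.

```javascript
// Babel 6 -> Babel 7 package renames for the pieces mentioned in this post.
const RENAMES = {
  'babel-core': '@babel/core',
  'babel-preset-env': '@babel/preset-env',
  'babel-preset-react': '@babel/preset-react',
  'babel-plugin-transform-object-rest-spread':
    '@babel/plugin-proposal-object-rest-spread',
};

// Return a copy of `deps` with old Babel names swapped for scoped ones.
// Renamed packages get the placeholder version '^7.0.0'; others are untouched.
function renameBabelDeps(deps) {
  const result = {};
  for (const [name, version] of Object.entries(deps)) {
    if (RENAMES[name]) {
      result[RENAMES[name]] = '^7.0.0';
    } else {
      result[name] = version;
    }
  }
  return result;
}

// Made-up devDependencies, just to show the helper in use.
const before = {
  'babel-core': '^6.26.0',
  'babel-preset-react': '^6.24.1',
  webpack: '^4.0.0',
};
console.log(renameBabelDeps(before));
```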

Upgrading Webpack

Again, this started with bumping all of the versions. There were also some config changes. Most notably, Webpack 4 now has a mode option, which sets the environment you are building for.

config.mode = 'production';
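If the same config file is used for local builds too, the mode can be chosen at build time instead of hard-coded. This is only a sketch: it assumes NODE_ENV is how your build tells production from development, and pickMode is a made-up helper name. Entry, output and loaders are left out.

```javascript
// webpack.config.js (partial): a sketch, not the full WAM config.
// Webpack 4 accepts 'development', 'production' or 'none' as the mode.
function pickMode(nodeEnv) {
  // Anything other than an explicit 'production' falls back to development.
  return nodeEnv === 'production' ? 'production' : 'development';
}

const config = {
  mode: pickMode(process.env.NODE_ENV),
};

module.exports = config;
```

Leaving mode unset makes Webpack 4 print a warning and fall back to production, so setting it explicitly keeps the build output quiet and predictable.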

It has also changed the way that UglifyJS is brought in if you’re using that to minify your code. You’ll need to install the new package.

npm install uglifyjs-webpack-plugin webpack-cli --save-dev

And then update your Webpack config, too.

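// The plugin now comes from its own package, installed above.
const UglifyJsPlugin = require('uglifyjs-webpack-plugin');

config.optimization = {
  minimizer: [
    new UglifyJsPlugin({
      uglifyOptions: {
        output: {
          comments: false // strip comments from the minified output
        }
      }
    })
  ]
};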

Hopefully this will come in handy if you are upgrading a similar stack and wondering why everything has exploded.
